AI programmatic SEO workflows are automated systems that use databases and large language models to generate thousands of search-optimized pages, each targeting a specific long-tail keyword. A single website can capture substantial organic traffic by publishing unique, valuable content for every variation of a search query (for example, individual pages for “best CRM for real estate agents,” “best CRM for dentists,” and 10,000 more industry combinations). As of March 2026, these workflows are among the most scalable SEO strategies, with some sites generating 100,000+ monthly visitors from programmatic pages that would take years to write manually.

The Delivery: Why One-by-One Blogging Is Dead

Writing blog posts one at a time is the old way. You research, write, edit, publish. One article takes 4-8 hours. At that pace, 1,000 articles means 4,000-8,000 hours, or roughly 2-4 years of full-time work.

The new meta: Find a dataset with 1,000-50,000 rows (all dog breeds, every software tool in a category, all cities in the US, every college major), then use AI to automatically generate a unique, highly valuable page for every single row in that database.

Real-world examples already dominating search results:

- Nomad List: 1,200+ city pages (each city gets its own cost-of-living, weather, and coworking-space data)

- Zillow: Millions of pages (every address generates a unique property page)

- Yelp: Millions of pages (every business × city combination)

- Product Hunt: 100,000+ tool pages (each product gets a unique review aggregation page)

These aren’t traditional ‘blog posts.’ They are the result of dataset to website automation—dynamically generated from structured data, each targeting ultra-specific long-tail keywords with high search intent.

Why this works in 2026:

- Long-tail dominates: 70% of searches are unique queries (not high-volume generic keywords)

- Google rewards specificity: “Best CRM for dentists” ranks better than “Best CRM” for dental practice searches

- Scale beats perfection: 1,000 pages at 7/10 quality capture more traffic than 10 pages at 10/10 quality

- AI makes it feasible: What required a development team in 2020 now requires Make.com + ChatGPT API

This is a perfect real-world application for an automated website build using basic Python and SQL skills, though tools like Make.com or Airtable can achieve the same results with a zero code SEO strategy. Either path works: technical scripts or no-code tools.

Once you have the traffic, you monetize it with AI digital product workflows, affiliate links, ads, or SaaS products. Traffic is the foundation—programmatic SEO builds that foundation at scale.

Let’s build your 1,000-page empire.

Level 1: Finding the Golden Dataset (The Database)

The foundation of AI programmatic SEO workflows is the dataset. Every row becomes a page. The richer your data, the better your pages.

What Makes a Good Programmatic Dataset?

Three critical criteria:

- Search volume exists: Each variation has 10-100+ monthly searches

- Clear search intent: Users searching want specific information (not just browsing)

- Scalable structure: 500-50,000 rows (too few = not worth effort, too many = indexing issues)

Bad dataset examples:

- “All words in the English dictionary” (no search intent)

- “Every number 1-1,000,000” (no value proposition)

- “Random combinations of adjectives + nouns” (spam)

Good dataset examples:

- All US cities + “best restaurants in [city]”

- All software categories + “best [category] tools for [industry]”

- All dog breeds + “is [breed] good for apartments?”

- All college majors + “[major] career paths and salary data”

- All medical conditions + “[condition] symptoms and treatment options”
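A minimal sketch of how a pattern like the ones above expands into concrete target keywords. The function name `expand_keywords` and the sample values are illustrative assumptions, not real keyword research.

```python
from itertools import product


def expand_keywords(pattern: str, **columns: list[str]) -> list[str]:
    """Fill every combination of column values into a keyword pattern."""
    names = list(columns)
    return [
        pattern.format(**dict(zip(names, combo)))
        for combo in product(*(columns[name] for name in names))
    ]


# Illustrative values only; a real dataset would supply these columns.
keywords = expand_keywords(
    "best {category} tools for {industry}",
    category=["CRM", "accounting"],
    industry=["dentists", "real estate agents"],
)
print(keywords)
```

Two categories and two industries give four keywords; the count grows multiplicatively, which is why search volume should be sampled before generating every combination.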

Where to Find Datasets

Public databases (free, high-quality):

- Kaggle: 50,000+ datasets on everything (startups, cities, products, demographics)

- Data.gov: US government data (cities, schools, health, transportation)

- Wikipedia Lists: Structured lists (countries, languages, historical events)

- Google Dataset Search: Meta-search for datasets across the web

- APIs: Many services offer bulk data exports (Yelp, Foursquare, government APIs)

Building custom datasets:

Option A: Web scraping (technical, requires Python/JavaScript)

- Scrape directory sites, review platforms, or listings

- Tools: BeautifulSoup (Python), Puppeteer (JavaScript)

- Legal note: Check robots.txt and terms of service—respect scraping policies
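The robots.txt check can be sketched with Python's standard library. The helper name `is_allowed` and the sample robots.txt text are assumptions for illustration; in real use, `RobotFileParser.set_url()` and `read()` would fetch the site's live file, and terms of service still need a manual read.

```python
from urllib.robotparser import RobotFileParser


def is_allowed(robots_txt: str, url: str, user_agent: str = "*") -> bool:
    """Return True if the given robots.txt text permits fetching the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical robots.txt content, used here instead of a network request.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /private/
"""

print(is_allowed(SAMPLE_ROBOTS, "https://example.com/listings/1"))
print(is_allowed(SAMPLE_ROBOTS, "https://example.com/private/data"))
```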

Option B: AI-generated datasets (creative approach)

Use this ChatGPT prompt to generate mock datasets for testing:

You are a Data Architect specializing in programmatic SEO datasets.

OBJECTIVE: Generate a structured dataset for programmatic SEO content.

DATASET SPECIFICATIONS:

- Topic/Industry: [e.g., "Project management software"]

- Number of rows: [e.g., 50-100]

- Target search intent: [e.g., "best [tool] for [industry]"]

REQUIRED COLUMNS:

1. Primary identifier (unique name/ID)

2. 3-5 attributes that differentiate entries (price, features, target audience, etc.)

3. Quantifiable metrics where possible (user count, rating, year founded)

4. Geographic data if relevant (country, region, city)

5. Category/subcategory for filtering

OUTPUT FORMAT:

Provide as CSV-formatted data with headers.

Ensure variety—avoid repetitive patterns that look AI-generated.

Include realistic data (not obvious placeholders like "Tool1", "Tool2").

EXAMPLE:

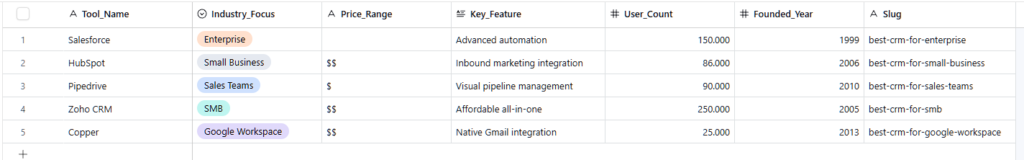

For "Best CRM software for different industries":

- Tool Name, Industry Focus, Price Range, Key Feature, User Count, Founded Year

Generate dataset now.

Example output:

csv

Tool_Name,Industry_Focus,Price_Range,Key_Feature,User_Count,Founded_Year

Salesforce,Enterprise,$$$$,Advanced automation,150000,1999

HubSpot,Small Business,$$,Inbound marketing integration,86000,2006

Pipedrive,Sales Teams,$,Visual pipeline management,90000,2010

Zoho CRM,SMB,$$,Affordable all-in-one,250000,2005

Copper,Google Workspace users,$$,Native Gmail integration,25000,2013

...

You now have 50-100 rows. Each row will become a unique page: “Best CRM for Enterprise,” “Best CRM for Small Business,” “Best CRM for Sales Teams,” etc.
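As a rough sketch of the row-to-page mapping, the snippet below reads a few rows of the sample CSV and derives one title per row. The helper name `rows_to_titles` is an assumption, and the "Best CRM for ..." wording simply mirrors the titles listed above.

```python
import csv
import io

SAMPLE_CSV = """\
Tool_Name,Industry_Focus,Price_Range,Key_Feature,User_Count,Founded_Year
Salesforce,Enterprise,$$$$,Advanced automation,150000,1999
HubSpot,Small Business,$$,Inbound marketing integration,86000,2006
Pipedrive,Sales Teams,$,Visual pipeline management,90000,2010
"""


def rows_to_titles(csv_text: str) -> list[str]:
    """Map each dataset row to the page title it would produce."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [f"Best CRM for {row['Industry_Focus']}" for row in reader]


titles = rows_to_titles(SAMPLE_CSV)
print(titles)
```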

Dataset Quality Check

Before proceeding, validate your dataset:

☐ Uniqueness test: Each row has distinct, valuable differentiators (not just name changes)

☐ Search volume validation: Sample 10 random rows, verify search volume exists (use Google Keyword Planner or Ahrefs)

☐ Value proposition: Could a human reasonably want this specific information? (passes “would I click this?” test)

☐ Scalability: 500-10,000 rows ideal (fewer = limited traffic, more = potential spam signals)

If dataset passes all checks, you’re ready to template your AI programmatic SEO workflows.
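The mechanical parts of this checklist (row count and row-level uniqueness) can be automated. The sketch below is one possible approach under assumed names (`audit_dataset`, a `key` column holding the identifier); search volume and the "would I click this?" test still need a human.

```python
def audit_dataset(rows: list[dict], key: str,
                  min_rows: int = 500, max_rows: int = 10_000) -> list[str]:
    """Return human-readable problems; an empty list means the checks passed."""
    problems = []
    if not min_rows <= len(rows) <= max_rows:
        problems.append(f"row count {len(rows)} outside {min_rows}-{max_rows}")
    # Duplicate identifiers would produce duplicate pages.
    keys = [row[key] for row in rows]
    if len(set(keys)) != len(keys):
        problems.append(f"duplicate values in '{key}'")
    # Rows that differ only by identifier fail the uniqueness test.
    fingerprints = [
        tuple(value for name, value in sorted(row.items()) if name != key)
        for row in rows
    ]
    if len(set(fingerprints)) != len(fingerprints):
        problems.append("some rows differ only by identifier")
    return problems


# Tiny illustrative dataset; min_rows is lowered so only uniqueness is flagged.
rows = [
    {"Tool_Name": "A", "Price_Range": "$", "Key_Feature": "x"},
    {"Tool_Name": "B", "Price_Range": "$", "Key_Feature": "x"},
]
issues = audit_dataset(rows, key="Tool_Name", min_rows=1)
print(issues)
```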

Level 2: The Template Engine (The Skeleton)

In AI programmatic SEO workflows, every programmatic page follows the same structure—only the data changes. This is the template that gets populated 1,000 times.

The Dynamic Page Structure

Core components every template needs:

- Dynamic Title Tag:

<title>Best {{Category}} for {{Industry}} in 2026 | YourBrand</title>

- Dynamic H1:

# Best {{Category}} for {{Industry}}: Complete 2026 Guide

- Dynamic Introduction: Paragraph using {{variables}} from dataset

- Data-driven sections: Lists, comparisons, tables populated from dataset columns

- Dynamic metadata: Unique meta descriptions, Open Graph tags, schema markup
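For the schema markup component, a hedged sketch of generating schema.org FAQPage JSON-LD from question and answer pairs. The function name `faq_jsonld` and the placeholder answer are assumptions; real answers would come from the dataset or the generated content.

```python
import json


def faq_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2)


# Placeholder content for illustration.
snippet = faq_jsonld([
    ("What's the average cost of CRM for dentists?", "Placeholder answer."),
])
print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```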

Example Template (Markdown/HTML hybrid)

markdown

---

title: "Best {{Tool_Category}} for {{Industry}}: Top {{Tool_Count}} Options in 2026"

meta_description: "Discover the best {{Tool_Category}} tools specifically designed for {{Industry}} professionals. Compare features, pricing, and user reviews."

slug: "/best-{{tool_category_slug}}-for-{{industry_slug}}"

---

# Best {{Tool_Category}} for {{Industry}}: Complete 2026 Guide

{{Industry}} professionals need specialized {{Tool_Category}} solutions that understand the unique challenges of {{industry_specific_challenge}}. This guide compares the top {{Tool_Count}} tools based on features, pricing, and real user feedback.

## Why {{Industry}} Needs Specialized {{Tool_Category}}

{{industry_pain_point_paragraph}}

## Top {{Tool_Count}} {{Tool_Category}} Tools for {{Industry}}

{{#each tools}}

### {{rank}}. {{tool_name}} — Best for {{use_case}}

**Price:** {{price_range}}

**Key Features:**

- {{feature_1}}

- {{feature_2}}

- {{feature_3}}

**User Rating:** {{rating}}/5 ({{review_count}} reviews)

**Why it's great for {{Industry}}:** {{industry_specific_benefit}}

{{/each}}

## How to Choose the Right {{Tool_Category}} for Your {{Industry}} Business

{{decision_framework_paragraph}}

## Frequently Asked Questions

**Q: What's the average cost of {{Tool_Category}} for {{Industry}}?**

A: {{price_analysis}}

**Q: Do I need {{specific_feature}} for {{Industry}} use?**

A: {{feature_importance_explanation}}

---

*Last updated: {{current_date}} | {{Tool_Count}} tools analyzed*

What gets replaced:

- {{Tool_Category}}: CRM, Project Management, Accounting Software, etc.

- {{Industry}}: Real Estate, Healthcare, Construction, Legal, etc.

- {{Tool_Count}}: Number from dataset

- {{#each tools}}: Loops through dataset rows

- {{industry_specific_challenge}}: Generated by AI based on industry

Critical principle: Every {{variable}} must pull from your dataset or be AI-generated uniquely for that combination. Never use identical paragraphs across pages—Google’s Helpful Content Update penalizes this.
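A minimal sketch of the substitution step, assuming simple `{{name}}` placeholders only. The `render` helper is hypothetical and deliberately fails when a value is missing, so no page ships with an unfilled variable. Loop blocks such as `{{#each tools}}` are not handled here and would need a real template engine (Handlebars or Jinja2, for example).

```python
import re

PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render(template: str, values: dict[str, str]) -> str:
    """Replace every {{name}} placeholder; fail loudly if a value is missing."""
    def substitute(match: re.Match) -> str:
        name = match.group(1)
        if name not in values:
            raise KeyError(f"no value for placeholder '{name}'")
        return str(values[name])

    return PLACEHOLDER.sub(substitute, template)


# Illustrative values for one dataset row.
title = render(
    "Best {{Tool_Category}} for {{Industry}}: Top {{Tool_Count}} Options in 2026",
    {"Tool_Category": "CRM", "Industry": "Dentists", "Tool_Count": "7"},
)
print(title)  # Best CRM for Dentists: Top 7 Options in 2026
```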

Level 3: The AI Content Generator (Bulk Writing)

To successfully scale your AI programmatic SEO workflows, templates provide structure, but you need unique content for each page to avoid Google’s duplicate content penalties. This is where bulk page generation with AI happens.

The Bulk Content Generation Prompt

Feed this to ChatGPT API, Claude API, or batch processing tools:

You are a Content Generator for programmatic SEO pages. Your goal is to create unique, valuable, non-generic content for each page variation.

INPUT DATA:

- Page topic: {{topic}}

- Primary keyword: {{keyword}}

- Data row: {{dataset_row}}

CONTENT REQUIREMENTS:

1. INTRODUCTION (150-200 words):

- Open with specific pain point relevant to this exact combination

- Mention 2-3 concrete examples or statistics

- Explain why this specific combination matters (not generic platitudes)

- Natural incorporation of primary keyword

2. VALUE-ADD SECTIONS:

- Don't just repeat dataset info—add insights

- Include "what to look for" guidance specific to this use case

- Explain trade-offs between options

- Provide decision framework

3. UNIQUENESS REQUIREMENTS:

- Use synonyms and varied sentence structure (don't template obvious patterns)

- Include 1-2 specific examples or case studies relevant to this combination

- Vary paragraph length (avoid robotic uniformity)

- Use conversational transitions, not formulaic connectors

4. AVOID:

- Generic statements that work for any page ("choosing the right tool is important")

- Obvious AI patterns ("in today's digital landscape," "it's worth noting that")

- Repetitive structure across pages (Google detects this)

- Keyword stuffing or unnatural phrasing

OUTPUT FORMAT:

Provide content in Markdown format with clearly marked sections.

Content should pass as written by domain expert, not template generator.

Generate content now for:

Topic: {{insert_specific_topic}}

Data: {{insert_dataset_row}}

Scaling Bulk Page Generation

For 1,000+ pages, you have three options:

Option A: API batch processing (technical, most efficient)

python

import os
import time

import pandas as pd
from openai import OpenAI  # requires openai>=1.0

# Load dataset (expects 'Category', 'Industry', and 'slug' columns)
df = pd.read_csv('your_dataset.csv')

# Initialize API client; read the key from the environment instead of hardcoding it
client = OpenAI(api_key=os.environ['OPENAI_API_KEY'])

results = []

for count, (_, row) in enumerate(df.iterrows(), start=1):
    prompt = f"""
Generate unique content for:
Topic: Best {row['Category']} for {row['Industry']}
Data: {row.to_dict()}

[Include full prompt from above]
"""

    response = client.chat.completions.create(
        model="gpt-4",
        messages=[{"role": "user", "content": prompt}],
        temperature=0.7,  # Adds variability between pages
    )

    results.append({
        'slug': row['slug'],
        'content': response.choices[0].message.content,
    })

    # Rate limiting
    time.sleep(2)  # Adjust based on API limits

    if count % 100 == 0:
        print(f"Processed {count} pages...")

# Save results
output_df = pd.DataFrame(results)
output_df.to_csv('generated_content.csv', index=False)

Cost: ~$0.02-0.08 per page (varies by model and content length)

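Time: 1,000 pages = 30-60 minutes at best; the 2-second delay alone adds about 33 minutes (1,000 × 2 s), and slower models or longer outputs take more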

Quality: Highest control over consistency

Option B: Tools for bulk page generation (beginner-friendly)

- Bulk.ly: Upload CSV, map columns to prompts, generate at scale

- Bannerbear: Template-based generation with API integration

- Airtable + Make.com: Visual workflow (covered in next section)

Cost: $20-50/month for tools + API costs

Time: 1,000 pages = 2-4 hours setup + overnight processing

Quality: Good, less granular control

Option C: Hybrid approach (recommended for most)

- Generate 100 pages with API to establish patterns

- Review quality, refine prompts

- Scale to full dataset with refined prompts

- Spot-check 5-10% of output for quality control

According to ZeroSkillAI, the hybrid approach catches quality issues early while maintaining scale efficiency.
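The spot-check step can be sketched as a random sample of slugs pulled for manual review. The helper name, the placeholder slugs, and the default 5% fraction (the low end of the 5-10% range above) are assumptions for illustration.

```python
import random
from typing import Optional


def pick_review_sample(slugs: list[str], fraction: float = 0.05,
                       seed: Optional[int] = None) -> list[str]:
    """Choose a random subset of pages for manual review (at least one page)."""
    if not slugs:
        return []
    count = min(len(slugs), max(1, round(len(slugs) * fraction)))
    return random.Random(seed).sample(slugs, count)


# Placeholder slugs standing in for a generated batch.
slugs = [f"best-crm-for-industry-{n}" for n in range(1000)]
sample = pick_review_sample(slugs, fraction=0.05, seed=42)
print(len(sample), sample[:3])
```

Passing a fixed `seed` makes the sample reproducible, which helps when two reviewers need to look at the same pages.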

Level 4: The Assembly Line (Dataset to Website Automation)

You have a dataset and generated content. Now you need to publish 1,000 pages to your actual website. This is dataset to website automation: the pipeline that turns rows and generated text into live pages.

The Complete Automation Pipeline

Tools for automated website build:

CMS Options:

WordPress (most flexible):

- Programmatic pages via custom post types

- Plugins: WP All Import (CSV to posts), Advanced Custom Fields (data structure)

- Best for: Full control, SEO plugins (Yoast, RankMath), extensive customization

Webflow (best design, no-code):

- CMS collections (structured data native)

- Bulk import via CSV

- Best for: Beautiful design, fast load times, less technical maintenance

Ghost (fastest, simplest):

- Markdown-native (perfect for generated content)

- API for bulk imports

- Best for: Speed-focused, minimal overhead

Framer (emerging favorite 2026):

- Component-based, modern design

- Easy programmatic page generation

- Best for: Developer-friendly no-code, modern aesthetics

The Make.com Workflow (Zero-Code Approach)

Step-by-step zero code SEO strategy pipeline:

Module 1: Data Source

- Tool: Airtable or Google Sheets

- Setup: Upload your dataset CSV

- Columns: Each column becomes a variable

Module 2: Content Generation

- Tool: OpenAI module in Make.com

- Setup: For each row, send prompt with row data to ChatGPT API

- Output: Save generated content back to new column in Airtable

Module 3: Slug Generation

- Tool: Make.com text formatter

- Setup: Create URL-friendly slug from title

- Example: “Best CRM for Real Estate” → “best-crm-for-real-estate”
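For readers on the scripted path, the same slug transformation can be sketched in a few lines of Python. The `slugify` helper is an illustrative assumption, not Make.com's own implementation.

```python
import re
import unicodedata


def slugify(title: str) -> str:
    """Lowercase the title, strip accents, and join words with hyphens."""
    ascii_text = (
        unicodedata.normalize("NFKD", title)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    return re.sub(r"[^a-z0-9]+", "-", ascii_text.lower()).strip("-")


print(slugify("Best CRM for Real Estate"))  # best-crm-for-real-estate
```

Two different titles can collapse to the same slug, so a uniqueness check on the resulting column is worth adding before publishing.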

Module 4: Website Publishing

- Tool: WordPress, Webflow, or Ghost API module

- Setup:

- Map Airtable columns to CMS fields

- Title → post title

- Content → post body

- Slug → URL slug

- Category → CMS category

- Action: Create new page/post

Module 5: Image Generation (optional)

- Tool: DALL-E or Midjourney module

- Setup: Generate featured image based on page topic

- Action: Attach to post as featured image

Module 6: Indexing Request

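- Tool: Google Search Console API

- Setup: Resubmit the XML sitemap that lists the new URLs (Google's separate Indexing API officially supports only job-posting and livestream pages)

- Action: Speeds up Google discovery (optional but helpful)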

Workflow execution:

- Trigger: Manual run or scheduled (daily batch)

- Rate: 10-50 pages per run (avoid overwhelming CMS)

- Time: 1,000 pages = 20-100 batch runs at that rate (20 runs at 50 pages each, 100 at 10 each), spread over 1-2 weeks with several runs per day
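Outside Make.com, the batching logic amounts to slicing the page list into fixed-size runs. A minimal sketch, with the `batches` helper and the placeholder page IDs as assumptions:

```python
from typing import Iterator


def batches(items: list[str], size: int) -> Iterator[list[str]]:
    """Yield consecutive slices of at most `size` items."""
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(items), size):
        yield items[start:start + size]


# Placeholder page IDs standing in for rows waiting to be published.
pages = [f"page-{n}" for n in range(1000)]
runs = list(batches(pages, size=50))
print(len(runs), len(runs[0]))
```

At 50 pages per run, 1,000 pages come out to 20 runs; smaller batches are gentler on the CMS but multiply the number of runs.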

Total automation cost:

- Make.com: $9-29/month (depending on operation volume)

- API calls: $20-80 for 1,000 pages

- CMS hosting: $10-30/month
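The cost ranges above can be combined with a small calculation. This sketch uses the article's own figures ($0.02-0.08 per page, Make.com at $9-29 and hosting at $10-30 per month); the function name and the one-month horizon are assumptions.

```python
def estimate_cost(pages: int, per_page: tuple[float, float],
                  monthly_fees: tuple[float, float],
                  months: int = 1) -> tuple[float, float]:
    """Return (low, high) total cost: one-off API spend plus recurring fees."""
    low = pages * per_page[0] + monthly_fees[0] * months
    high = pages * per_page[1] + monthly_fees[1] * months
    return round(low, 2), round(high, 2)


# Monthly fees: Make.com ($9-29) plus CMS hosting ($10-30).
low, high = estimate_cost(
    1000, per_page=(0.02, 0.08), monthly_fees=(9 + 10, 29 + 30)
)
print(f"First month: ${low:.0f}-${high:.0f}")
```

Only the API portion scales with page count; the subscriptions recur every month whether or not new pages are generated.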

Total time investment:

- Initial setup: 4-8 hours

- Per batch: 5 minutes monitoring

- Fully automated after setup

This is dataset to website automation at scale—what would take months manually happens in days with AI workflows.

Level 5: The Final Quality Checklist (Avoiding the Spam Penalty)

Google’s Helpful Content Update specifically targets low-quality programmatic content. Before launching your 1,000 pages, ensure you pass these checks:

Pre-Launch Quality Audit

☐ Value Addition Test (Critical)

- Question: Does each page provide unique value beyond just presenting database info?

- Check: Read 10 random pages—would a human find them useful?

- Pass criteria: Each page includes analysis, recommendations, or insights (not just data dumps)

- Fix if failing: Enhance prompts to include expert commentary, comparisons, decision frameworks

☐ Uniqueness Verification (Critical)

- Question: Are pages substantially different from each other?

- Check: Run 5 random pages through Copyscape or plagiarism checker

- Pass criteria: <30% similarity between pages, <10% similarity to existing web content

- Fix if failing: Increase temperature setting in AI generation, add more dynamic sections
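Page-to-page similarity can also be estimated locally before paying for an external checker. The sketch below uses Jaccard similarity over three-word shingles, which is one common stand-in measure and not how Copyscape scores pages; the 0.30 threshold mirrors the pass criterion above, and all names and sample texts are illustrative.

```python
from itertools import combinations


def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Split text into overlapping word n-grams."""
    words = text.lower().split()
    return {tuple(words[i:i + size]) for i in range(max(1, len(words) - size + 1))}


def similarity(a: str, b: str) -> float:
    """Jaccard similarity of two texts' shingle sets, from 0.0 to 1.0."""
    sa, sb = shingles(a), shingles(b)
    return len(sa & sb) / len(sa | sb) if sa | sb else 0.0


def too_similar(pages: dict[str, str],
                threshold: float = 0.30) -> list[tuple[str, str, float]]:
    """List page pairs whose similarity exceeds the threshold."""
    return [
        (x, y, score)
        for x, y in combinations(pages, 2)
        if (score := similarity(pages[x], pages[y])) > threshold
    ]


# Short illustrative texts; real pages would be the generated bodies.
pages = {
    "dentists": "the best crm for dentists handles patient recalls and appointment reminders",
    "lawyers": "the best crm for lawyers tracks billable matters and client intake forms",
    "dentists-copy": "the best crm for dentists handles patient recalls and appointment reminders too",
}
flagged = too_similar(pages)
print(flagged)
```

Comparing every pair is quadratic in the number of pages, so for thousands of pages it is more practical to run this on a random sample.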

☐ Technical SEO Health

- Page speed: All pages load in <2.5 seconds (use GTmetrix, PageSpeed Insights)

- Mobile responsive: Test on mobile devices (Google’s mobile-first indexing)

- Internal linking: Each page links to 3-5 related pages (creates site structure)

- Schema markup: Implement relevant structured data (Product, FAQPage, Article schemas)

- Sitemap: Generate XML sitemap with all programmatic pages, submit to Google Search Console
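Sitemap generation is easy to script from the slug column. A minimal sketch using the standard library; the function name, base URL, and date are placeholder assumptions. The sitemap protocol caps a single file at 50,000 URLs, so larger sites need several files plus a sitemap index.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(base_url: str, slugs: list[str], lastmod: str) -> str:
    """Return sitemap XML with one <url> entry per slug."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for slug in slugs:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = f"{base_url.rstrip('/')}/{slug.lstrip('/')}"
        ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Placeholder domain, slugs, and date.
sitemap_xml = build_sitemap(
    "https://example.com/",
    ["/best-crm-for-dentists", "/best-crm-for-real-estate"],
    lastmod="2026-03-01",
)
print(sitemap_xml)
```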

☐ Content Quality Signals

- Word count: At least 800 words per page, with 800-1,500 as the target range (avoid thin content)

- Readability: Flesch reading ease 60+ (conversational, not academic)

- Unique meta descriptions: Every page has distinct meta description (not template)

- Images: At least 1-2 unique images per page (improves engagement metrics)

- Updated dates: Include publish/update dates (signals freshness)
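Two of these signals, word count and meta description uniqueness, are simple to check in bulk. The sketch below assumes each page is a dict with hypothetical `slug`, `content`, and `meta_description` keys; readability and image checks are left out.

```python
from collections import Counter


def quality_issues(pages: list[dict], min_words: int = 800) -> dict[str, list[str]]:
    """Flag thin pages and meta descriptions shared by more than one page."""
    thin = [p["slug"] for p in pages if len(p["content"].split()) < min_words]
    counts = Counter(p["meta_description"].strip().lower() for p in pages)
    duplicated = [
        p["slug"] for p in pages
        if counts[p["meta_description"].strip().lower()] > 1
    ]
    return {"thin": thin, "duplicate_meta": duplicated}


# Illustrative pages: "b" is thin, and both share one meta description.
pages = [
    {"slug": "a", "content": "word " * 900, "meta_description": "Compare CRMs for dentists."},
    {"slug": "b", "content": "word " * 120, "meta_description": "Compare CRMs for dentists."},
]
report = quality_issues(pages)
print(report)
```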

Post-Launch Monitoring

Week 1-2: Monitor Google Search Console for indexing issues

Week 3-4: Check for manual actions or ranking drops

Month 2-3: Analyze which page variations perform best, double down

Red flags to watch:

- Pages not indexing after 2 weeks (possible quality issues)

- High bounce rates (>80%) across programmatic pages (indicates poor UX)

- Manual action warnings in Search Console (immediate attention required)

If Google penalizes: Improve content quality on best-performing pages first, let them recover, then improve others. Don’t delete all pages at once—signals abandonment.

Frequently Asked Questions

Will Google penalize programmatic SEO in 2026?

Google doesn’t penalize programmatic SEO inherently—it penalizes low-quality programmatic content. There’s a crucial distinction.

What Google penalizes:

• Thin content (200-word pages with just data tables)

• Duplicate/templated content (identical paragraphs across pages with only names swapped)

• No unique value (pages that don’t answer search intent better than existing results)

• Spam signals (obviously AI-generated without human review, keyword stuffing)

What Google rewards:

• Unique, valuable content for each page variation

• Clear search intent satisfaction (user finds what they’re looking for)

• Good user experience (fast load, mobile-friendly, easy navigation)

• Expertise signals (depth of information, cited sources, updated data)

Proof it works: Nomad List, Zillow, Yelp, TripAdvisor, and thousands of successful sites use programmatic SEO. They succeed because each page provides genuine value for its specific query.

Best practice for AI programmatic SEO workflows in 2026:

• Start with 100-500 pages (test waters)

• Monitor Search Console and Analytics closely

• Improve quality iteratively based on performance data

• Scale to 1,000-10,000+ only after validating approach

Human review in AI programmatic SEO workflows is non-negotiable: Spot-check 5-10% of generated pages before publishing. Fix obvious AI errors, improve thin sections, verify accuracy. This quality control is what separates successful programmatic sites from penalized ones.

Do I need to know how to code to build automated website build systems?

No, but technical skills provide more control and cost efficiency.

Three paths to building AI programmatic SEO workflows:

Path A: The pure zero code SEO strategy (0% coding, highest ongoing cost)

• Tools: Airtable + Make.com + Webflow/WordPress

• Setup complexity: Low (visual workflows, drag-and-drop)

• Monthly cost: $50-100 (tool subscriptions)

• Scale limit: 10,000 pages (before costs become prohibitive)

• Best for: Beginners testing viability, solo founders prioritizing speed

Path B: Low-code (20% coding, moderate cost)

• Tools: Python scripts + no-code CMS

• Setup complexity: Medium (copy-paste code with minor modifications)

• Monthly cost: $20-50 (API + hosting only)

• Scale limit: 50,000+ pages

• Best for: Willing to learn basics, want cost efficiency at scale

Path C: Full-code (80% coding, lowest cost)

• Tools: Custom Next.js/Gatsby site + database

• Setup complexity: High (requires web development knowledge)

• Monthly cost: $10-30 (hosting only)

• Scale limit: Millions of pages

• Best for: Technical founders, agencies building for clients

Recommendation: Start with Path A (no-code) to validate concept. If you reach 500+ pages and $1,000+/month revenue, transition to Path B or C for better economics.

Learning curve reality: Path A takes 1-2 days to learn. Path B takes 1-2 weeks for Python basics. Path C takes 2-3 months to build from scratch. Choose based on your timeline and technical comfort.

How long does it take Google to index 1,000 programmatic pages?

Indexing speed varies dramatically based on site authority and technical implementation.

Typical timelines:

New site (Domain Authority <20):

• First 100 pages: 2-4 weeks

• Pages 100-500: 4-8 weeks

• Pages 500-1,000: 8-12 weeks

• Total: 3-4 months for full indexing

Established site (Domain Authority 40+):

• First 100 pages: 3-7 days

• Pages 100-500: 1-2 weeks

• Pages 500-1,000: 2-4 weeks

• Total: 4-6 weeks for full indexing

Factors that speed up indexing:

1. XML sitemap submission: Submit to Google Search Console immediately

2. Internal linking: Every new page links to 3-5 existing pages (helps Googlebot discover)

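3. Indexing requests: Use URL Inspection in Search Console to request indexing for priority pages (a daily quota applies; Google's Indexing API officially covers only job-posting and livestream pages)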

4. Incremental publishing: Add 50-100 pages weekly (avoids “sudden spam” signals)

5. Social signals: Share new pages on social media (drives initial traffic)

Factors that slow down indexing:

1. Low crawl budget: New/small sites get crawled less frequently

2. Thin content: Google deprioritizes indexing if pages seem low-value

3. Technical issues: Slow load times, broken links, poor mobile experience

4. Orphan pages: Pages with no internal links get discovered slowly
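Orphan pages can be avoided by assigning internal links programmatically. One possible scheme, sketched under assumed names: each page links to the next few pages in its own category, wrapping around, and a second helper lists any page that nothing links to.

```python
def assign_links(pages: dict[str, str], per_page: int = 3) -> dict[str, list[str]]:
    """Link each page to the next `per_page` pages in its category (circularly)."""
    by_category: dict[str, list[str]] = {}
    for slug, category in pages.items():
        by_category.setdefault(category, []).append(slug)
    links: dict[str, list[str]] = {}
    for siblings in by_category.values():
        n = len(siblings)
        count = min(per_page, n - 1)  # a page never links to itself
        for i, slug in enumerate(siblings):
            links[slug] = [siblings[(i + k) % n] for k in range(1, count + 1)]
    return links


def orphans(links: dict[str, list[str]]) -> set[str]:
    """Pages that no other page links to."""
    linked = {target for targets in links.values() for target in targets}
    return set(links) - linked


# Illustrative slugs mapped to categories; "lonely" has no siblings.
pages = {f"crm-{i}": "crm" for i in range(5)}
pages["lonely"] = "misc"
links = assign_links(pages)
print(links["crm-0"], orphans(links))
```

A page that is alone in its category still ends up orphaned, so it needs a link from a hub or category page instead.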

Pro strategy for bulk page generation indexing:

• Publish 50-100 pages weekly for 10-20 weeks (not all at once)

• Monitor the “Pages” report (formerly “Coverage”) in Google Search Console

• Prioritize indexing best-performing page types first

• Build backlinks to top-level category pages (trickles authority to child pages)

Reality check: Not all 1,000 pages will rank. Expect 20-30% to drive 80% of traffic. Focus on improving top performers rather than obsessing over indexing stragglers.

Conclusion: Scale Is the Moat

Here’s the fundamental truth about SEO in 2026: A site with 1,000 pages targeting 1,000 specific long-tail keywords captures more traffic than a site with 10 perfect articles targeting 10 generic keywords.

Why? Because long-tail searches represent 70% of all Google queries. When someone searches “best project management software for construction companies under 50 employees,” they don’t want a generic “best project management software” article. They want the specific answer.

AI programmatic SEO workflows capture that specificity at scale.

What you now have:

- Dataset sourcing strategies for finding 1,000+ row opportunities

- Template systems for dynamic page generation

- AI programmatic SEO workflows for unique content creation

- Dataset to website automation pipelines (no-code and technical)

- Quality checklists to avoid Google penalties

- Complete understanding of indexing and scaling strategies

What you can do with this:

- Launch niche directory sites in your expertise area

- Build comparison platforms for software, services, or products

- Create location-based service pages for local SEO

- Generate educational content for every variation of a topic

- Monetize traffic with AI digital product workflows, affiliate links, or ads

The economics:

Traditional blogging:

- 1 article = 4-8 hours = 100-500 visitors/month

- 100 articles = 400-800 hours = 10,000-50,000 visitors/month

AI programmatic SEO workflows:

- 1,000 pages via an automated website build = 40 hours setup + automation = 50,000-200,000 visitors/month

- Ongoing: 2-4 hours/month maintenance

The ROI is asymmetric. Initial effort is higher, but compound returns dwarf traditional methods.

The path forward:

Week 1: Find your golden dataset (500-1,000 rows)

Week 2: Build template + generate first 100 pages

Week 3: Publish first batch, monitor indexing

Week 4: Refine based on data, scale to full dataset

Most people overcomplicate this. Start simple:

- Pick a dataset you understand (your industry, your hobby, your expertise)

- Create 100 pages first (validates concept before massive investment)

- Wait 4-6 weeks for indexing and traffic data

- Double down on what works, pivot away from what doesn’t

The programmatic SEO revolution is here. Sites launched in 2024-2025 using these exact methods now rank for 10,000+ keywords and generate six-figure annual revenue. The barrier to entry keeps dropping while opportunity expands.

Ready to build your 1,000-page empire?

Pick your dataset. Build your template. Generate your content. Publish before it’s perfect.

Follow ZeroSkillAI.com for more automation frameworks, copy-paste configurations, and zero-skill tools that create unfair advantages. We’re democratizing web publishing—no development degree required.

Google rewards depth and breadth. Your 1,000-page site beats their 10-page blog. Start building today.
